Abstract

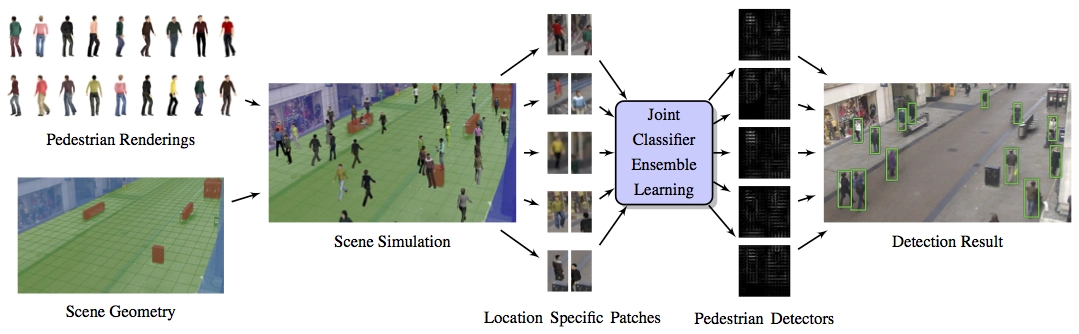

We consider the problem of designing a scene-specific pedestrian detector in a scenario where we have zero instances of real pedestrian data (i.e., neither labeled nor unlabeled real data). This scenario may arise when a new surveillance system is installed in a novel location and a scene-specific pedestrian detector must be trained before any pedestrians have been observed. The key idea of our approach is to infer the potential appearance of pedestrians using geometric scene data and a customizable database of virtual simulations of pedestrian motion. We propose an efficient discriminative learning method that generates a spatially-varying pedestrian appearance model that takes into account the perspective geometry of the scene. As a result, our method is able to learn a unique pedestrian classifier customized for every possible location in the scene. Our experimental results show that our proposed approach outperforms classical pedestrian detection models and hybrid synthetic-real models. Our results also reveal a surprising finding: when data is limited, our method, using purely synthetic data, is able to outperform models trained on real scene-specific data.