Encrypted Machine Learning

Bridge2AI Seminar

Michigan State University

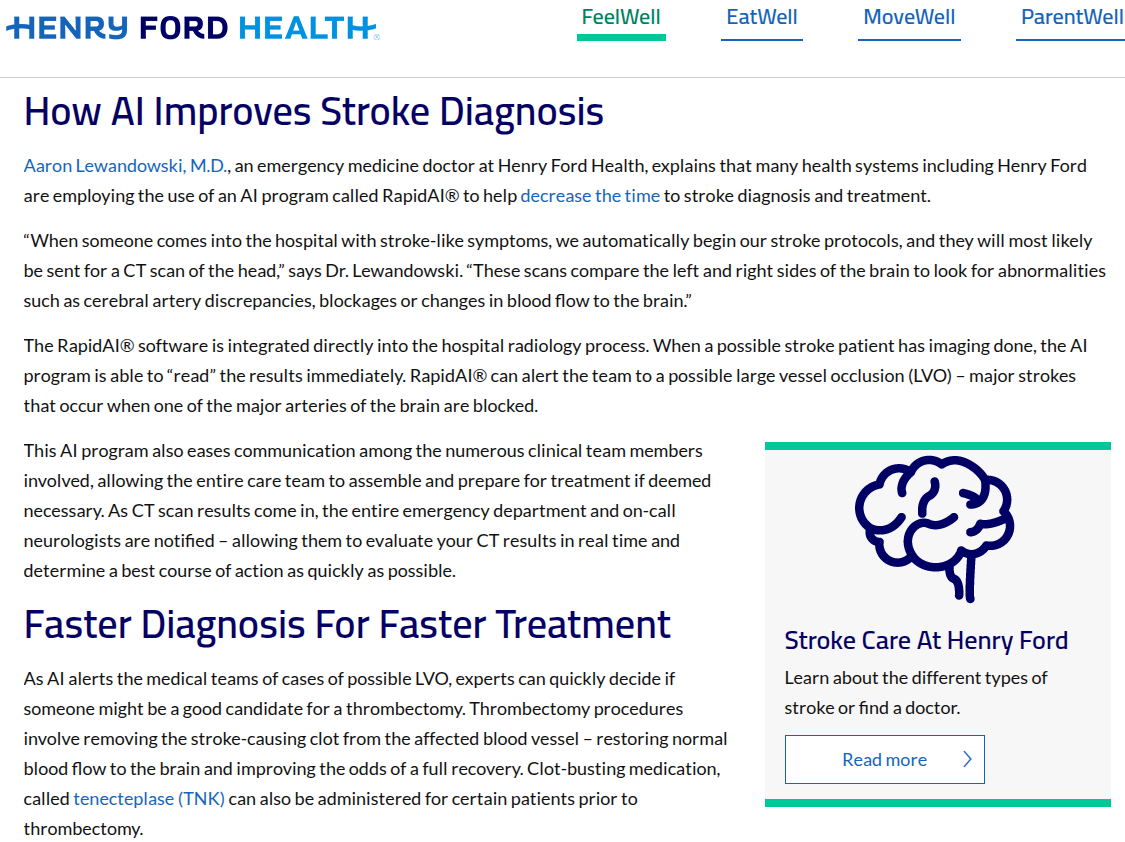

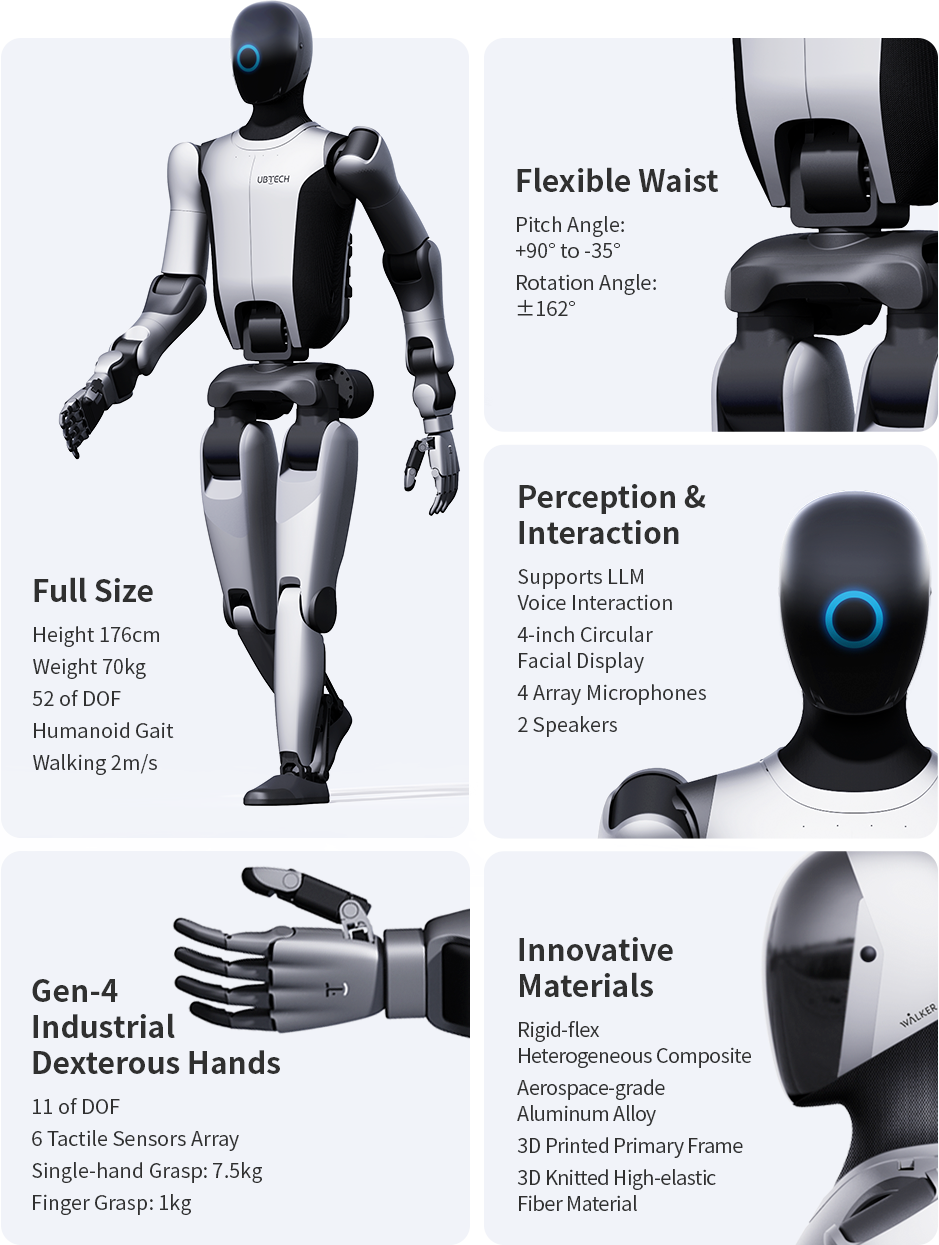

Proliferation of AI in Our Lives

State of Affairs

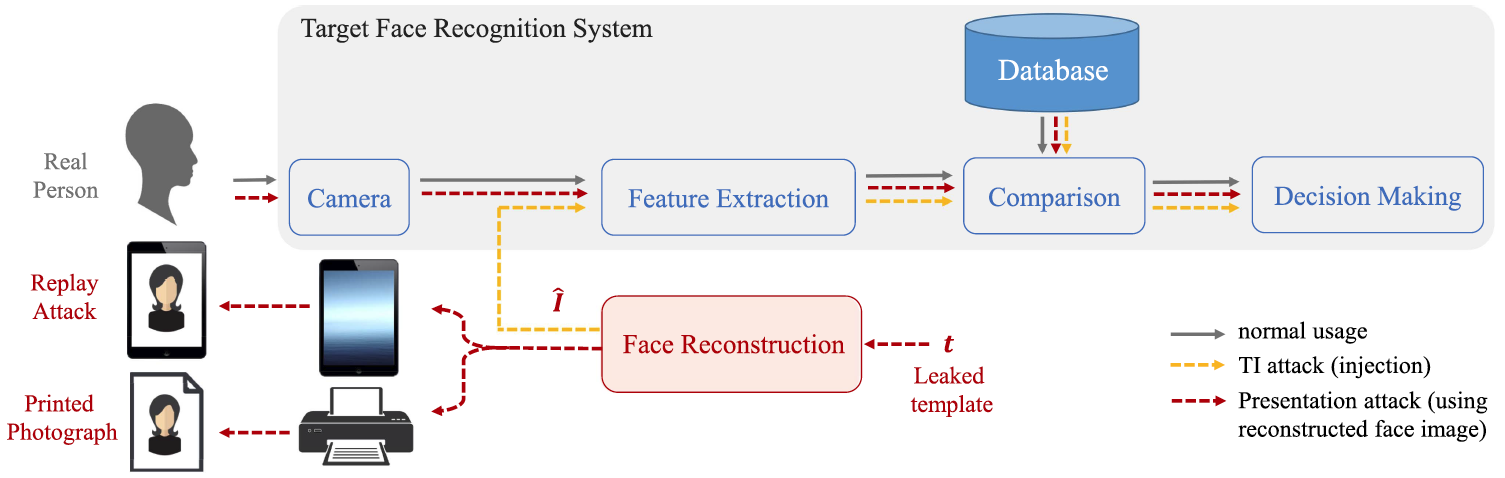

(report from the academic world)

Attacks on Face Recognition Systems

Template inversion attack enables Presentation attack

Presentation attack via digital replay and printed photograph

Attacks on Large Language Models

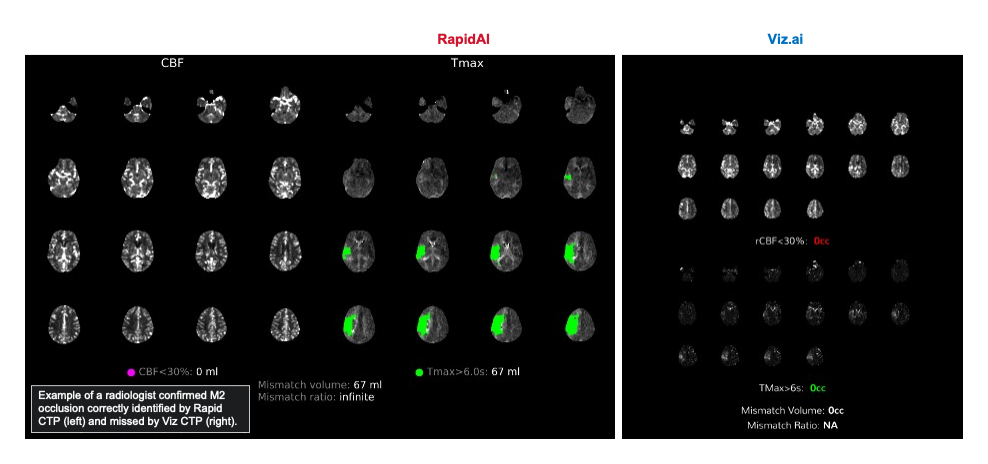

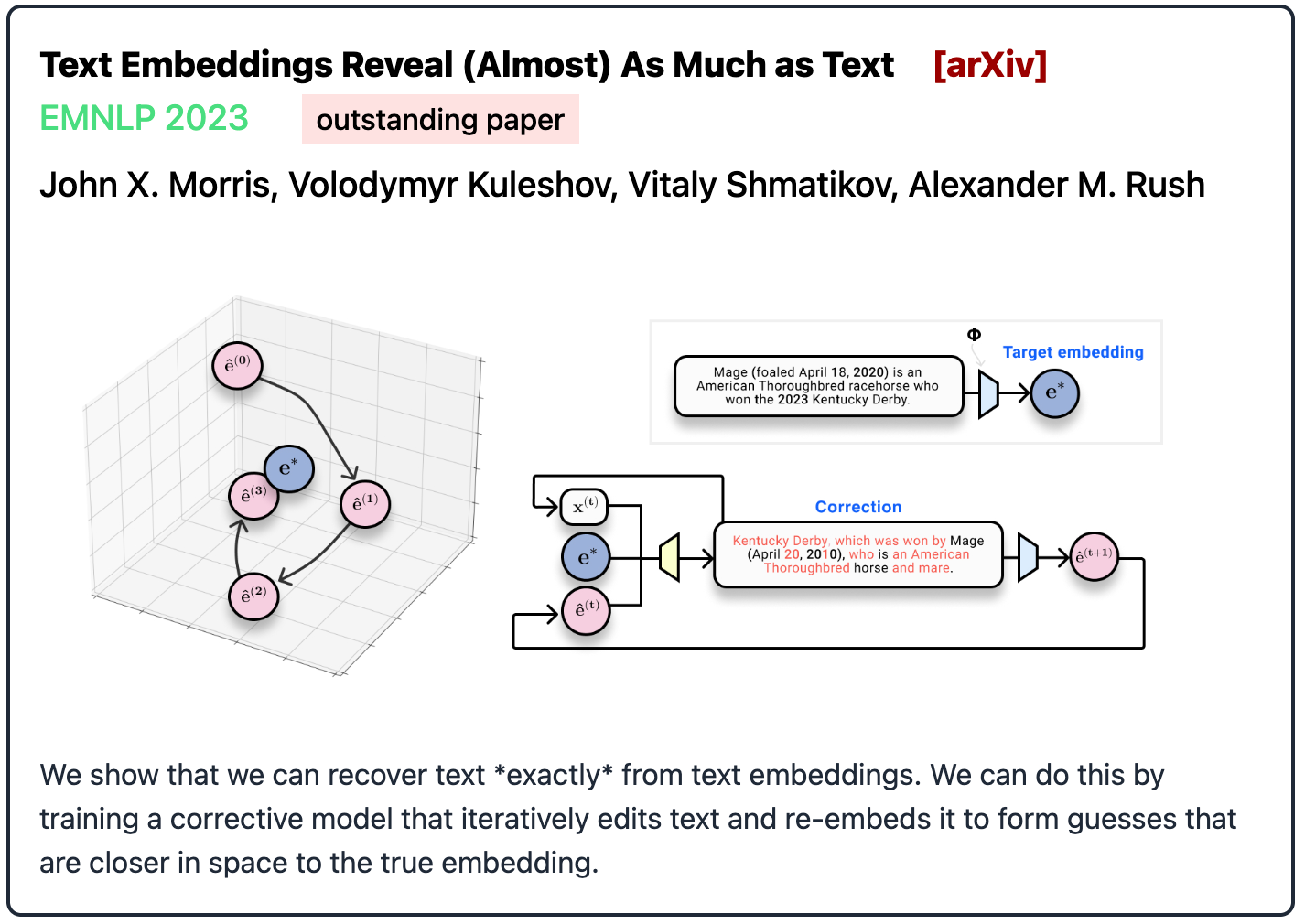

Attacks on Text Embeddings

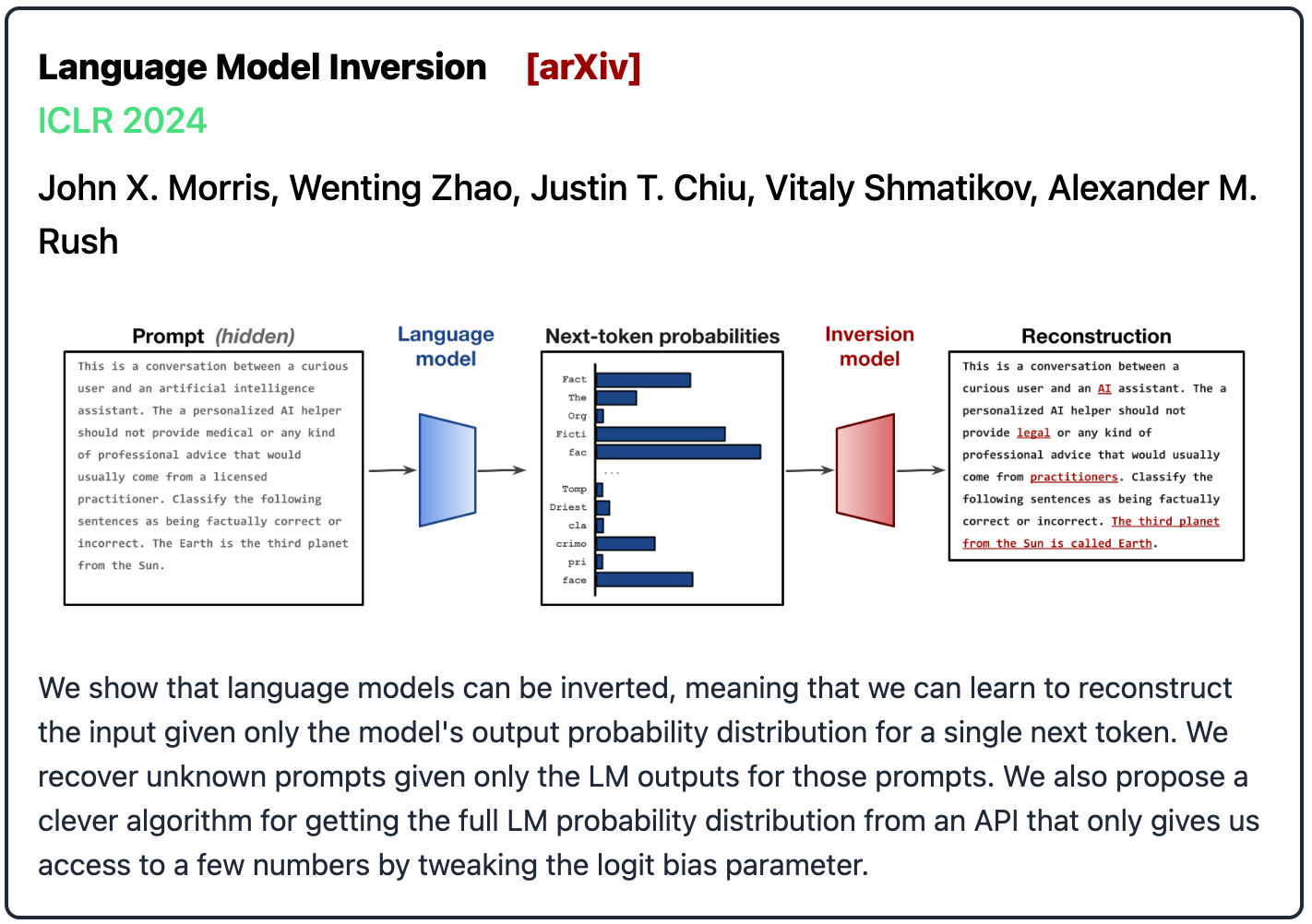

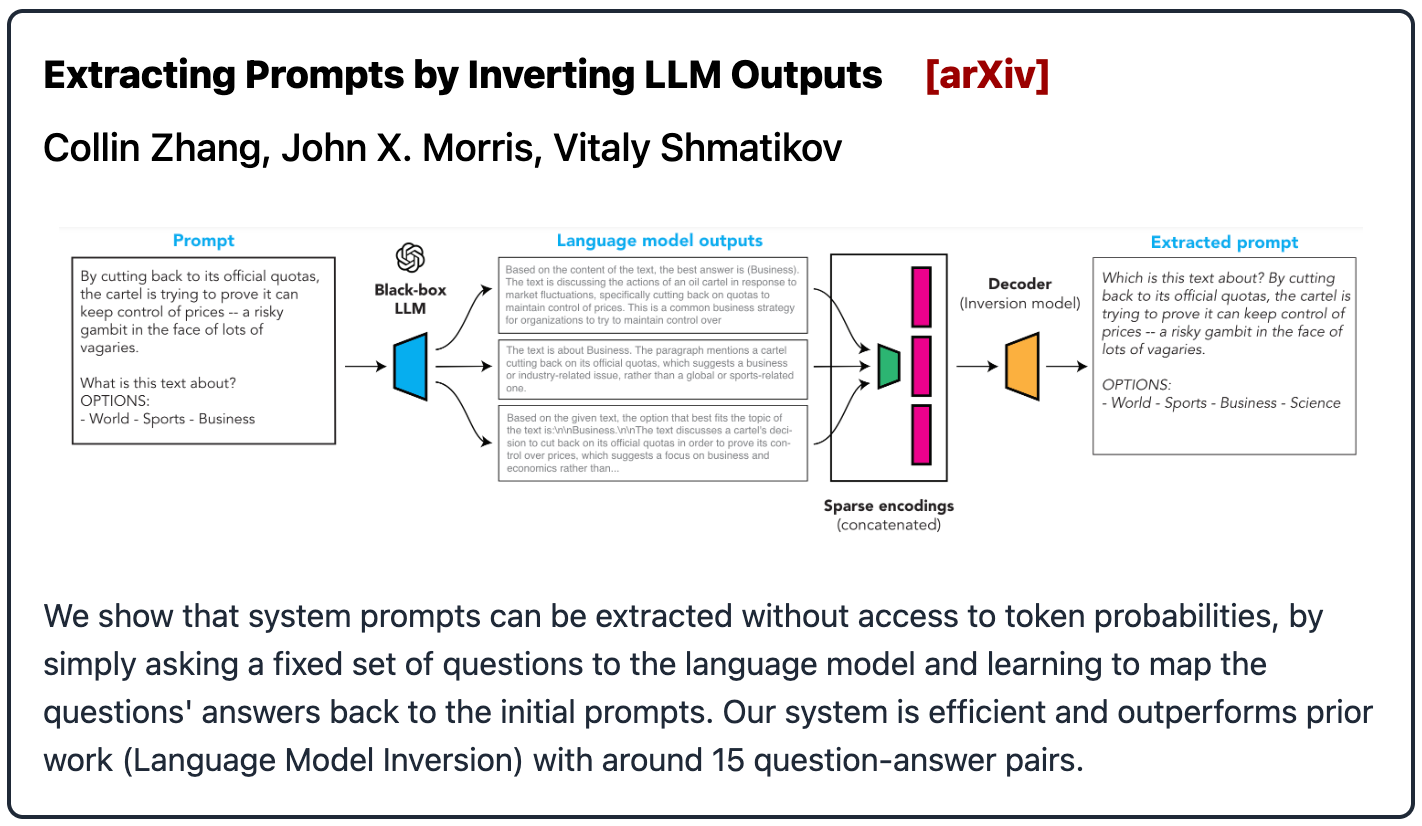

Attacks on Language Models

Attacks on User Prompts

State of Affairs

(report from the real world)

Sep 19, 2024

Healthcare Data Breaches of 500+ Records (2009-2024)

Only going to get worse with AI Chatbots

Privacy Requirements in Healthcare

Strict regulations govern data privacy in transit, at rest, and in use.

Privacy Enhancing Technologies

Differential Privacy

Homomorphic Encryption

Secure Multi-Party Computation

Trusted Execution Environment

Today's Agenda

What are we trying to protect in healthcare AI?

- $x$: Medical Imaging, EHR, Genomics, Voice, Identity

- $f$: Proprietary AI parameters, Diagnostic algorithms

Data Privacy

- Protect patient privacy (e.g., identity, diagnosis).

- Prevent unauthorized access to PHI.

- Build trust for data sharing.

- Comply with regulations like HIPAA.

Model Privacy

- Protect proprietary diagnostic models.

- Prevent model inversion attacks.

- Prevent reconstruction of training data (e.g., patient records).

- Maintain competitive advantage.

The blind spot of traditional encryption

Privacy of user data is not guaranteed.

Encryption Schemes

What we have.

What we want.

Is there an encryption scheme that satisfies our security desiderata?

FHE can help AI models achieve trustworthiness

- FHE is conjectured to be post-quantum secure.

FHE enables AI models to process encrypted data without decryption.

What is Fully Homomorphic Encryption?

Run programs on encrypted data without ever decrypting it.

FHE can, in theory, handle universal computation.

Foundational Concepts

CKKS FHE Scheme

Making Neural Networks FHE-Friendly

FHE Foundational Concepts

Evolution of FHE schemes

Learning with Errors (LWE)

The Hardness Foundation of LWE-Based FHE

The hardness of the LWE problem is what prevents attackers from breaking the security of FHE schemes.

$\mathbf{s} = \begin{bmatrix}10 \\ 82 \\ 50 \\ 51\end{bmatrix}$

\[
\begin{aligned}
77w + 7x + 28y + 23z &= 2859 \\
21w + 19x + 30y + 48z &= 3508 \\
4w + 24x + 33y + 38z &= 3848 \\
8w + 20x + 84y + 61z &= 6225
\end{aligned}
\]

\[
\begin{aligned}
77w + 7x + 28y + 23z &= 2859 \; \color{#ff8080}{+ \; (-3)} \\
21w + 19x + 30y + 48z &= 3508 \; \color{#ff8080}{+ \; (+2)} \\
4w + 24x + 33y + 38z &= 3848 \; \color{#ff8080}{+ \; (-1)} \\
8w + 20x + 84y + 61z &= 6225 \; \color{#ff8080}{\underbrace{+ \; (+0)}_{\text{noise}}}
\end{aligned}
\]

\[
\begin{aligned}
77w + 7x + 28y + 23z &= 2859 \; \color{#ff8080}{+ \; (-3)} \; \color{cyan}{\text{(mod 89)}} \\
21w + 19x + 30y + 48z &= 3508 \; \color{#ff8080}{+ \; (+2)} \; \color{cyan}{\text{(mod 89)}} \\
4w + 24x + 33y + 38z &= 3848 \; \color{#ff8080}{+ \; (-1)} \; \color{cyan}{\text{(mod 89)}} \\
8w + 20x + 84y + 61z &= 6225 \; \color{#ff8080}{\underbrace{+ \; (+0)}_{\text{noise}}} \; \color{cyan}{\underbrace{\text{(mod 89)}}_{\text{ring}}}
\end{aligned}
\]

Breaking FHE $\Leftrightarrow$ Solving LWE problem: recovering private key from public key.

| System | Problem | Complexity | Solution |
|---|---|---|---|
| $b=As$ in $\mathbb{R}$ | System of Linear Equations | P | Gaussian Elimination |
| $b=As+e$ in $\mathbb{R}$ | Least Squares Problem | P | Least Squares Estimator |
| $b=As+e \mod q$ in $\mathbb{Z}_q$ | Learning with Errors Problem | Conjectured hard (at least as hard as worst-case lattice problems) | No known efficient algorithm (not even quantum) |
| $b(X)=A(X)s(X)+e(X) \mod q$ in $\mathbb{Z}_q[X]/(X^N+1)$ | Ring Learning with Errors Problem | Conjectured hard (at least as hard as worst-case ideal-lattice problems) | No known efficient algorithm (not even quantum) |
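The contrast between the first and third rows can be sketched in a few lines of pure Python. This is only an illustration under stated assumptions: the modulus 89 and the noise values come from the equations above, while the matrix and secret are randomly generated here and are not the slide's numbers. Without noise, Gaussian elimination mod q recovers the secret exactly; with the small noise added, the same procedure returns a different vector.

```python
import random

Q = 89  # small prime modulus, as in the toy equations above

def solve_mod(A, b, q):
    """Solve A x = b (mod q) by Gauss-Jordan elimination; None if A is singular."""
    n = len(A)
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for col in range(n):
        pivot = next((r for r in range(col, n) if M[r][col] % q), None)
        if pivot is None:
            return None
        M[col], M[pivot] = M[pivot], M[col]
        inv = pow(M[col][col], -1, q)  # modular inverse (Python 3.8+)
        M[col] = [(v * inv) % q for v in M[col]]
        for r in range(n):
            if r != col and M[r][col]:
                f = M[r][col]
                M[r] = [(vr - f * vc) % q for vr, vc in zip(M[r], M[col])]
    return [M[r][n] for r in range(n)]

def matvec_mod(A, s, q):
    return [sum(a * x for a, x in zip(row, s)) % q for row in A]

rng = random.Random(0)
n = 4
secret = [rng.randrange(Q) for _ in range(n)]  # hypothetical secret, not the slide's s
while True:  # draw a random matrix until it is invertible mod Q
    A = [[rng.randrange(Q) for _ in range(n)] for _ in range(n)]
    if solve_mod(A, [0] * n, Q) is not None:
        break
noise = [-3, 2, -1, 0]  # the noise values shown in the equations above

b_clean = matvec_mod(A, secret, Q)                           # b = A s      (mod q)
b_noisy = [(bi + ei) % Q for bi, ei in zip(b_clean, noise)]  # b = A s + e  (mod q)

recovered_clean = solve_mod(A, b_clean, Q)  # equals the secret
recovered_noisy = solve_mod(A, b_noisy, Q)  # differs from the secret
```

Real LWE instances use far larger dimensions and many more samples than unknowns, so this sketch only shows why exact elimination stops working once noise is present. It says nothing about the cost of actual attacks.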

Pipeline of Homomorphic Evaluation of Encrypted Data

Using CKKS scheme as an example

Encoding/Decoding

Key generation

Encryption/Decryption

Evaluation

Data Encoding and decoding

Ex: CKKS encoding operates in the cyclotomic ring $\mathbb{Z}_q[X]/(X^N+1)$
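A minimal sketch of the idea behind this encoding, assuming a simplified slot ordering and ignoring the reduction mod $q$ (coefficients are assumed to stay well below $q/2$). A vector of $N/2$ complex numbers is interpolated at the roots of $X^N+1$, scaled by a factor, and rounded to integer coefficients. Decoding evaluates the polynomial at the same roots. The function names and the scale value are illustrative choices, not part of any library.

```python
import cmath

def slot_roots(N):
    """Roots of X^N + 1, ordered so that root[N-1-j] is the conjugate of root[j]."""
    return [cmath.exp(1j * cmath.pi * (2 * j + 1) / N) for j in range(N)]

def encode(z, N, scale):
    """Map N/2 complex slots to an integer polynomial of degree < N."""
    assert len(z) == N // 2
    roots = slot_roots(N)
    # Extend with conjugates so that the interpolated coefficients are real.
    values = list(z) + [v.conjugate() for v in reversed(z)]
    coeffs = []
    for k in range(N):
        # Inverse of the Vandermonde matrix at these roots is its conjugate transpose / N.
        c = sum(v * (r ** k).conjugate() for v, r in zip(values, roots)) / N
        coeffs.append(round(scale * c.real))
    return coeffs

def decode(coeffs, N, scale):
    """Evaluate the polynomial at the first N/2 roots and undo the scaling."""
    roots = slot_roots(N)[: N // 2]
    return [sum(c * r ** k for k, c in enumerate(coeffs)) / scale for r in roots]

N, SCALE = 8, 2 ** 20
z1 = [1 + 2j, -0.5, 3.25 - 1j, 0.125j]
z2 = [0.5, 1j, -2, 4 + 4j]
p1, p2 = encode(z1, N, SCALE), encode(z2, N, SCALE)

decoded = decode(p1, N, SCALE)  # close to z1, up to rounding error
# Adding polynomials coefficient-wise adds the underlying slots.
decoded_sum = decode([a + b for a, b in zip(p1, p2)], N, SCALE)
```

The rounding step is where CKKS becomes approximate: a larger scale gives more precision but consumes more of the modulus.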

Key generation

- Example of CKKS Keys

- Private key: $sk = s(X)$

- Public key: $pk=(a(X),b(X))$

- Evaluation keys: $evK$

- Multiplication keys for ciphertext size reduction.

- Rotation keys to enable rotate and conjugate.

- Main parameters: N and log(q)

Encryption and Decryption

Ex: CKKS ciphertext is composed of two polynomials.

- Encryption

- $\left[\!\left[ m \right]\!\right]=(c_0(X),c_1(X))$

- Decryption

- $\tilde{m}(X) = \text{round}(c_0(X) + c_1(X) \cdot s(X) \mod q)$

- Multiplicative Depth

- Ciphertexts are associated with a specific level.
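The key generation, encryption, and decryption steps above can be illustrated with a toy RLWE sketch in pure Python. Everything here is an assumption made for illustration: the parameters are tiny and offer no security, the message is a vector of small integers rather than a CKKS-encoded polynomial, and the public key is formed as $b = -a \cdot s + e$ so that decryption matches $c_0 + c_1 \cdot s$ from the slide.

```python
import random

N = 8            # toy ring degree (real parameters are in the thousands or more)
Q = 2 ** 30      # toy ciphertext modulus
SCALE = 2 ** 10  # scaling factor applied to messages
rng = random.Random(1)

def poly_mul(a, b):
    """Multiply in Z_Q[X]/(X^N + 1): X^N wraps around to -1."""
    out = [0] * N
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            if i + j < N:
                out[i + j] += ai * bj
            else:
                out[i + j - N] -= ai * bj
    return [c % Q for c in out]

def poly_add(a, b):
    return [(x + y) % Q for x, y in zip(a, b)]

def small_poly():
    """Polynomial with coefficients in {-1, 0, 1} (secret or noise)."""
    return [rng.choice((-1, 0, 1)) for _ in range(N)]

def keygen():
    s = small_poly()
    a = [rng.randrange(Q) for _ in range(N)]
    b = poly_add([-x for x in poly_mul(a, s)], small_poly())  # b = -a*s + e
    return s, (a, b)

def encrypt(pk, m):
    a, b = pk
    u, e0, e1 = small_poly(), small_poly(), small_poly()
    c0 = poly_add(poly_add(poly_mul(b, u), e0), [SCALE * x for x in m])
    c1 = poly_add(poly_mul(a, u), e1)
    return c0, c1

def decrypt(sk, ct):
    c0, c1 = ct
    noisy = poly_add(c0, poly_mul(c1, sk))             # SCALE*m + small noise
    centered = [c - Q if c > Q // 2 else c for c in noisy]
    return [round(c / SCALE) for c in centered]

sk, pk = keygen()
m1 = [3, -1, 4, 1, -5, 9, 2, -6]
m2 = [2, 7, -1, 8, 2, -8, 1, 8]
ct1, ct2 = encrypt(pk, m1), encrypt(pk, m2)
ct_sum = (poly_add(ct1[0], ct2[0]), poly_add(ct1[1], ct2[1]))  # homomorphic addition
```

Decrypting `ct_sum` yields the element-wise sum of `m1` and `m2`, because the noise terms stay far below `SCALE / 2` at these sizes. Multiplication needs the evaluation keys and rescaling, which this sketch omits.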

FHE Schemes support basic HE capabilities

Bootstrapping enables unlimited HE operations over encrypted data

Bootstrapping is the slowest HE operation. Avoid it where possible.

Data Packing and SIMD operations

Choice of packing scheme significantly affects latency.

Functions natively supported by FHE

Vector operations

Polynomial evaluation

Matrix operations

Vector operations Under Encryption

Polynomial evaluation Under Encryption

- Polynomial evaluation $$P(X) = a_0 + a_1 X + a_2 X^2 + \cdots + a_n X^n$$

- Viewed as an inner product $P(X) = \langle \mathbf{a}, \mathbf{X} \rangle$

- $\mathbf{a} = (a_0, a_1, \cdots, a_n)$

- $\mathbf{X} = (1, X^1, \cdots, X^n)$

Encrypted polynomial evaluation

- Pt-Ct multiplication $ct = \mathbf{a} \times \left[\!\left[ \mathbf{X} \right]\!\right]$

- Ct-Ct multiplication $ct = \left[\!\left[ \mathbf{a} \right]\!\right] \times \left[\!\left[ \mathbf{X} \right]\!\right]$

Complexity

- Additions: $\log_2(n+1)-1$

- Multiplications: $1$

- Rotations: $\log_2(n+1)$

Latency

Low latency that depends on $n$.
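The multiplication and rotation counts above can be checked with a plaintext simulation of the rotate-and-sum pattern. This sketch models a packed ciphertext as a Python list, which is an assumption for illustration only: no encryption happens, and the powers of $X$ are taken as already packed. It evaluates a degree-3 polynomial, so $n+1 = 4$ slots.

```python
ops = {"mul": 0, "rot": 0}  # counters for the costly homomorphic operations

def slot_mul(u, v):
    """Slot-wise multiplication of two packed vectors."""
    ops["mul"] += 1
    return [a * b for a, b in zip(u, v)]

def rotate(v, k):
    """Cyclic rotation of the slots to the left by k positions."""
    ops["rot"] += 1
    return v[k:] + v[:k]

def slot_add(u, v):
    return [a + b for a, b in zip(u, v)]

def packed_inner_product(a, x):
    """Rotate-and-sum; assumes the number of slots is a power of two."""
    acc = slot_mul(a, x)
    shift = len(acc) // 2
    while shift >= 1:
        acc = slot_add(acc, rotate(acc, shift))
        shift //= 2
    return acc[0]  # every slot now holds the full inner product

coeffs = [1, 2, 3, 4]                 # P(X) = 1 + 2X + 3X^2 + 4X^3
X = 2
powers = [X ** k for k in range(4)]   # (1, X, X^2, X^3), assumed already packed
result = packed_inner_product(coeffs, powers)   # P(2) = 49
```

After the call, `ops` records one multiplication and $\log_2(4) = 2$ rotations, matching the counts listed for those two operations.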

Applications

Matrix operations Under Encryption

Matrix-vector multiplication

Complexity

- Additions: $2$

- Multiplications: $n$ rows

- Rotations: $3$

Latency

Medium latency that depends on the matrix dimension.
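The operation counts for matrix-vector multiplication depend on the packing, which the text here does not specify. As one concrete reference point, the sketch below simulates the widely used diagonal method on plaintext lists. It is not necessarily the packing behind the counts above: for an $n \times n$ matrix it uses $n$ slot-wise multiplications and $n-1$ non-trivial rotations.

```python
def rotate(v, k):
    """Cyclic rotation of the slots to the left by k positions."""
    k %= len(v)
    return v[k:] + v[:k]

def diagonal(M, i):
    """i-th generalized diagonal: entry j is M[j][(j + i) % n]."""
    n = len(M)
    return [M[j][(j + i) % n] for j in range(n)]

def matvec_diagonal(M, v):
    """Compute M v as a sum over i of diagonal_i * rotate(v, i), slot-wise."""
    n = len(M)
    acc = [0] * n
    for i in range(n):
        term = [d * x for d, x in zip(diagonal(M, i), rotate(v, i))]
        acc = [a + t for a, t in zip(acc, term)]
    return acc

M = [[1, 2, 3],
     [4, 5, 6],
     [7, 8, 9]]
v = [1, 0, -1]
packed_result = matvec_diagonal(M, v)
plain_result = [sum(m * x for m, x in zip(row, v)) for row in M]
```

Under encryption, the diagonals would typically be plaintexts (model weights) and `v` the encrypted input, so each term is a Pt-Ct multiplication.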

Applications

Matrix operations Under Encryption

Matrix-Matrix multiplication

Complexity

- Additions: $2$

- Multiplications: $n \times m$

- Rotations: $0$

Latency

High latency that depends on the dimensions of the matrices.

Application

Story So Far...

Right security parameters

Adequate packing scheme

Minimize #Multiplications and #Rotations

Apply bootstrapping to avoid exhausting ciphertext levels

Primitive mathematical operations are feasible under encryption.

Applying FHE to AI

What are the challenges for applying FHE to AI?

- FHE libraries operate at a low level and are not user-friendly.

- FHE is computationally expensive (on the order of 10,000x slower than unencrypted computation).

- Standard non-linear layers are not natively supported (e.g., ReLU, MaxPool).

- Needs both cryptography and AI expertise.

User Friendly Software for Encrypted AI

From PyTorch to FHE Inference

Neural Architecture

Trained Weights

Orion

import orion

net = ResNet50()

orion.fit(net, trainloader)

orion.compile()

net.he()

ctOut = net(ctIn)

Adapting AI Models for FHE Computation

How to Adapt CNNs for FHE

- Supported one-dimensional operations under FHE:

- Multiplication

- Addition

- Rotation

Polynomial approximation for non-linear activations

Polynomial Approximation for non-linear activations

- High-degree approximation

- Slow: more multiplications, more bootstrappings

- Accurate: high-degree polynomials

- Training/fine-tuning: not necessary

- Low-degree approximation

- Fast: fewer multiplications, fewer bootstrappings

- Not accurate: low-degree polynomials

- Training/fine-tuning: necessary
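The degree versus accuracy trade-off can be illustrated with a simple construction: write ReLU as $x \cdot (1 + \text{sign}(x))/2$ and approximate the sign function by repeatedly composing the odd cubic $f(t) = (3t - t^3)/2$ on $[-1, 1]$. This is only a sketch of one possible polynomial approximation, assuming inputs are scaled to $[-1, 1]$. It is not the specific approximation used by the methods discussed in this talk.

```python
def sign_approx(x, iterations):
    """Compose f(t) = (3t - t^3) / 2 with itself; approaches sign(t) on [-1, 1]."""
    for _ in range(iterations):
        x = (3 * x - x ** 3) / 2
    return x

def relu_approx(x, iterations):
    """ReLU(x) = x * (1 + sign(x)) / 2 with sign replaced by a polynomial."""
    return x * (1 + sign_approx(x, iterations)) / 2

def max_error(iterations, samples=2001):
    """Largest deviation from the true ReLU on a uniform grid over [-1, 1]."""
    xs = [-1 + 2 * i / (samples - 1) for i in range(samples)]
    return max(abs(relu_approx(x, iterations) - max(x, 0.0)) for x in xs)

# Each extra composition triples the polynomial degree and adds multiplicative depth.
errors = {k: max_error(k) for k in (1, 3, 6)}
```

The error shrinks as the number of compositions grows, while each composition costs additional ciphertext multiplications and levels. That is the tension between the high-degree and low-degree options listed above.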

Co-design CNNs and FHE systems

- Security Requirement

Encryption Parameters

- Cyclotomic polynomial degree: $N$

- Level: $L$

- Modulus: $Q_l=\prod_{i=0}^{l} q_i, \; 0 \leq l \leq L$

- Bootstrapping Depth: $K$

- Hamming Weight: $h$

- Latency

- Prediction Accuracy
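To make the relationship between the level $L$, the modulus $Q_l$, and polynomial degree concrete, here is a small worked sketch. The prime sizes are hypothetical, $\log_2 q_i$ is approximated by the bit size of $q_i$, and each ciphertext-ciphertext multiplication is assumed to consume one level.

```python
def modulus_bits(prime_bits):
    """log2(Q_l) for l = 0..L, where Q_l is the product of q_0..q_l."""
    sizes, total = [], 0
    for bits in prime_bits:
        total += bits
        sizes.append(total)
    return sizes

def mult_depth(degree):
    """Minimum ciphertext-ciphertext multiplicative depth for degree d: ceil(log2(d))."""
    return (degree - 1).bit_length() if degree >= 1 else 0

prime_bits = [60, 40, 40, 40, 40, 40]   # hypothetical chain q_0..q_5, so L = 5
log_q = modulus_bits(prime_bits)         # [60, 100, 140, 180, 220, 260]
levels_left = (len(prime_bits) - 1) - mult_depth(7)   # degree 7 uses 3 of 5 levels
```

A larger $\log Q_L$ supports more levels before bootstrapping. For a fixed security target it also forces a larger $N$, which raises latency.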

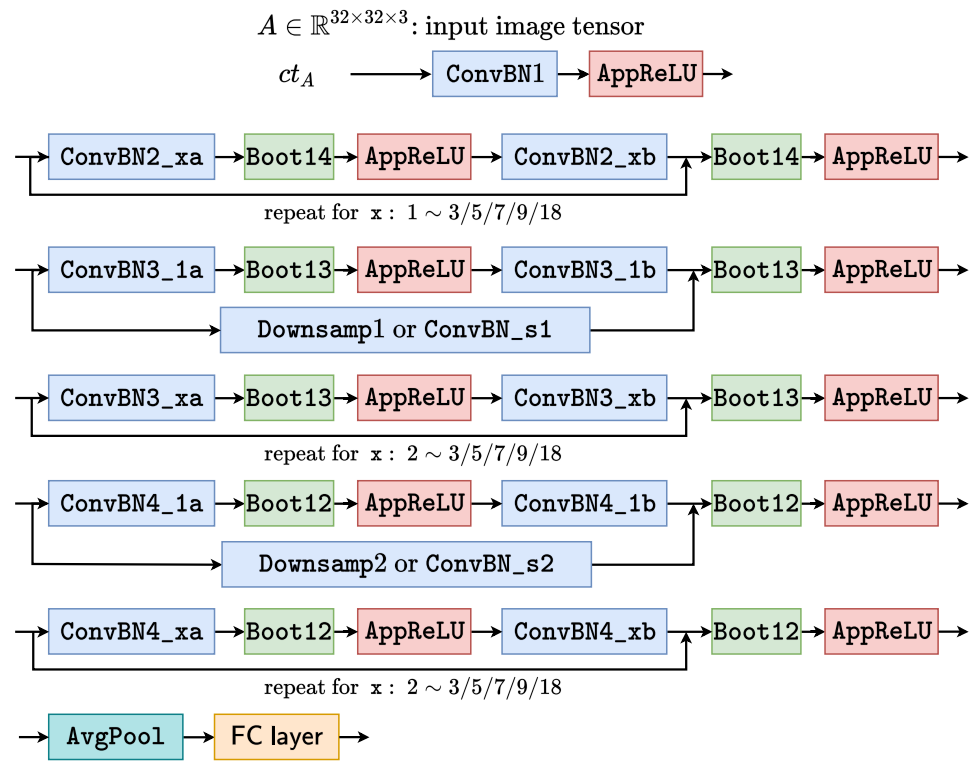

Polynomial CNNs

- Conv, BN, pooling, FC layers: packing

- Polynomials: degree -> depth

- Number of layers: ResNet20, ResNet32

- Input image resolution

- Channels/kernels

Space of Homomorphic Neural Architectures

How to effectively trade off accuracy against latency?

Limitations of handcrafted architectures

Manually Designed FHE Evaluation Architecture

- Precise approximation of ReLU function.

- Same ReLU approximation is used for all layers.

- Every ResBlock has bootstrapping layer.

- Evaluation architecture specialized for ResNets.

Joint Search for Layerwise EvoReLU and Bootstrapping Operations

- Flexible Architecture

- On-demand bootstrapping

Experimental Setup

Dataset: CIFAR10

- 50,000 training images

- 10,000 test images

- 32x32 resolution, 10 classes

Hardware & Software

- Amazon AWS, r5.24xlarge

- 96 CPUs, 768 GB RAM

- Microsoft SEAL, 3.6

Latency and Accuracy Trade-offs under FHE

| Approach | MPCNN | AESPA | REDsec | AutoFHE |
|---|---|---|---|---|
| Venue | ICML22 | arXiv22 | NDSS23 | USENIX24 |
| Scheme | CKKS | CKKS | TFHE | CKKS |
| Polynomial | high | low | n/a | mixed |
| Layerwise | No | No | n/a | Yes |
| Strategy | approx | train | train | adapt |
| Architecture | manual | manual | manual | search |

- MPCNN: Low-Complexity Convolutional Neural Networks on Fully Homomorphic Encryption Using Multiplexed Parallel Convolutions, ICML 2022

- AESPA: Accuracy Preserving Low-degree Polynomial Activation for Fast Private Inference, arXiv 2022

- REDsec: Running Encrypted Discretized Neural Networks in Seconds, NDSS 2023

- AutoFHE: Automated Adaption of CNNs for Efficient Evaluation over FHE, USENIX Security 2024

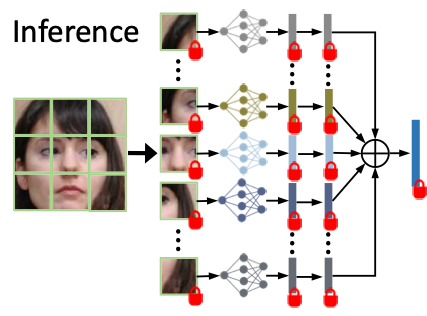

Practical Application: End-to-End Encrypted Face Recognition

CryptoFace: End-to-End Encrypted Face Recognition

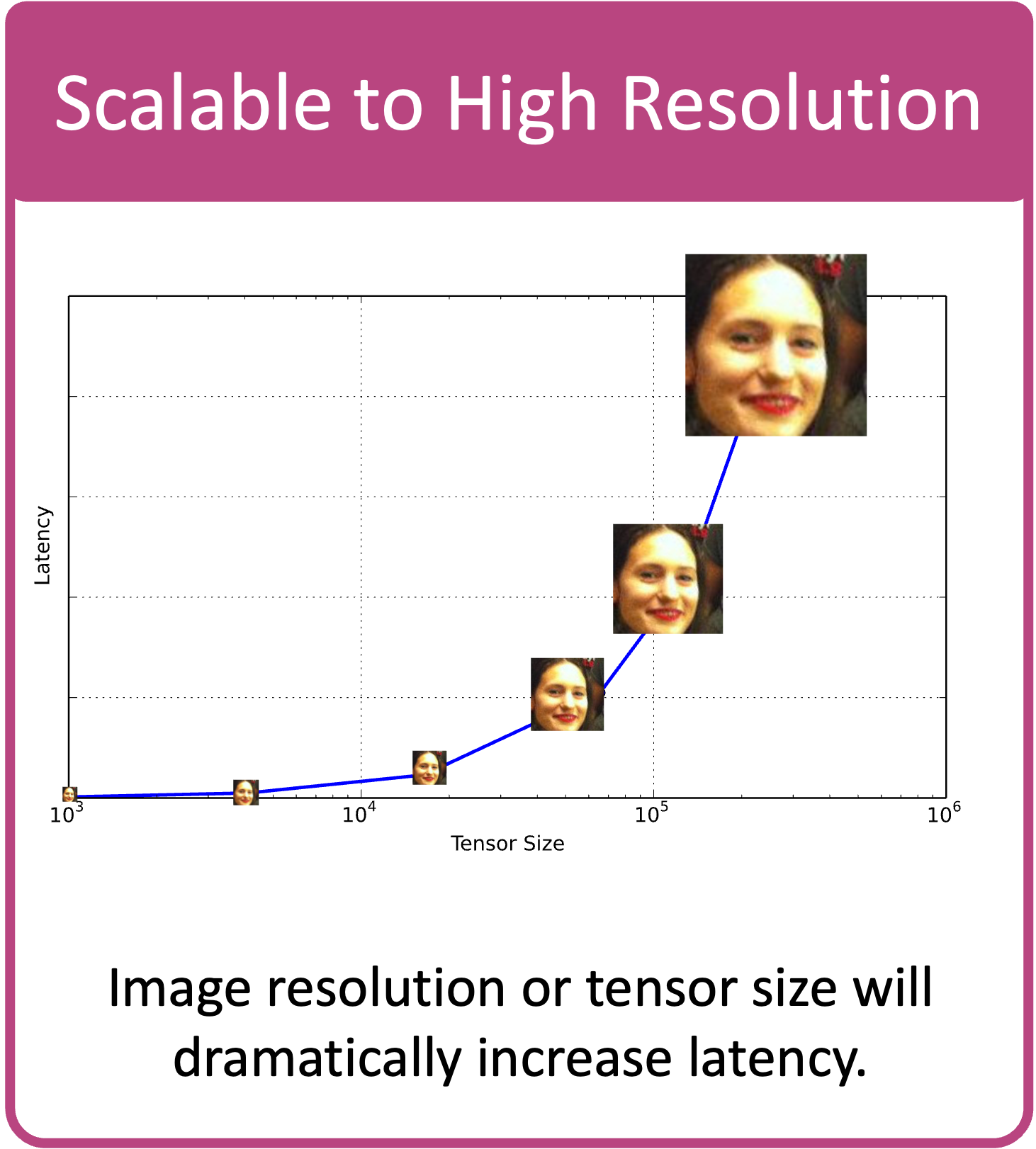

Mixture of Shallow Patch CNNs

- Shallow patch CNNs (PCNNs) consume fewer levels.

- The mixture of PCNNs can be evaluated in parallel under FHE.

Co-design of neural architecture and FHE system.

Encrypted Face Recognition Evaluation

Hardware & Software

- Amazon AWS, r5.24xlarge

- 96 CPUs, 768 GB RAM

- Orion (w/ Lattigo)

Encrypted Face Recognition Evaluation

| Approach | Resolution | Backbone: Network | Backbone: Params | Backbone: Boot | 5 Datasets: Average Accuracy¹ | Latency (s) | Memory (GB) |
|---|---|---|---|---|---|---|---|
| MPCNN | 64x64 | ResNet32 | 0.53M | 31 | 85.60 | 1,277 | 286 |
| MPCNN | 64x64 | ResNet44 | 0.73M | 43 | 89.64 | 1,640 | 286 |
| AutoFHE | 64x64 | ResNet32 | 0.53M | 8 | 82.69 | 667 | 286 |
| CryptoFace | 64x64 | CryptoFaceNet4 | 0.94M | 2 | 89.42 | 220 | 269 |
| CryptoFace | 96x96 | CryptoFaceNet9 | 2.12M | 2 | 90.99 | 232 | 276 |
| CryptoFace | 128x128 | CryptoFaceNet16 | 3.78M | 2 | 91.46 | 241 | 277 |

¹ Average Accuracy: the average one-to-one verification accuracy across five face datasets, i.e., LFW, AgeDB, CALFW, CPLFW, and CFP-FP.

7.5x speedup over MPCNN with ResNet44 (27 min → 3.6 min) at comparable accuracy (89.64 vs. 89.42)

Near-constant latency across different resolutions

Practical Application: End-to-End Encrypted LLM

End-to-End Encrypted LLMs

Closed-source implementations only.

Practical Application: End-to-End Encrypted SecureRAG

SecureRAG: End-to-End Secure Retrieval-Augmented Generation

Story So Far...

Co-designing AI and FHE architectures is critical for efficiency.

Missing Piece in the Puzzle

Hardware Accelerators for FHE

Concluding Remarks

Key Takeaways

- Secure Healthcare AI is achievable with Fully Homomorphic Encryption (FHE).

- Efficient and specialized architectures are crucial for practical encrypted inference.

- Real-world applications, like secure facial recognition, secure LLMs and genomic analysis, demonstrate feasibility today.

Next Steps

- Explore our open-source code & tutorials. (fhe4cv.github.io - CVPR 2025 Tutorial)

- Stay connected through community resources. (fhe.org Discord)

- Collaborate on future research challenges! (vishnu@msu.edu)

We appreciate your interest. Let's advance secure healthcare AI together.