NSGANetV2: Evolutionary Multi-Objective Surrogate-Assisted Neural Architecture Search [slides][arXiv]

@inproceedings{lu2020nsganetv2,
  title={NSGANetV2: Evolutionary Multi-Objective Surrogate-Assisted Neural Architecture Search},
  author={Zhichao Lu and Kalyanmoy Deb and Erik Goodman and Wolfgang Banzhaf and Vishnu Naresh Boddeti},
  booktitle={European Conference on Computer Vision (ECCV)},
  year={2020}
}

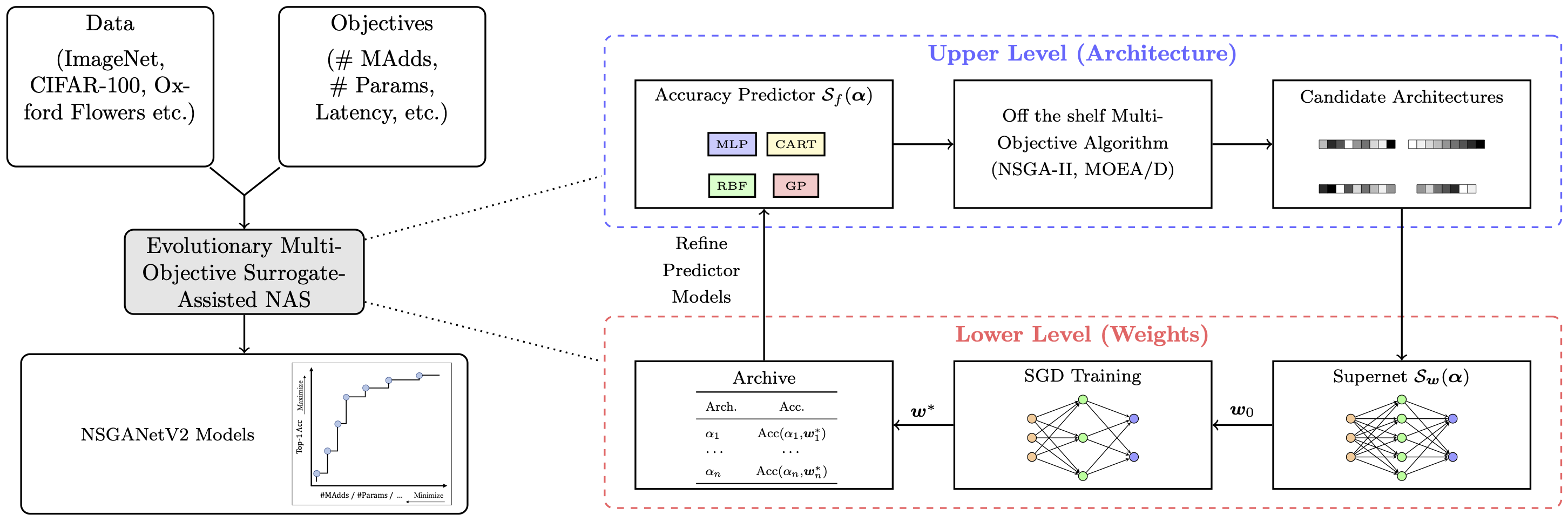

Overview

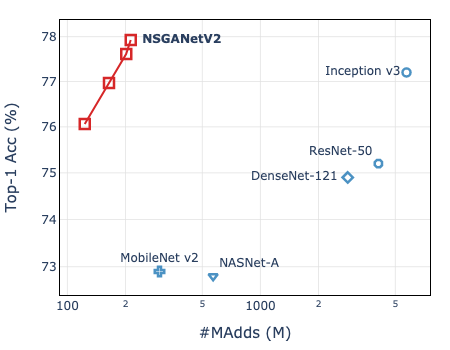

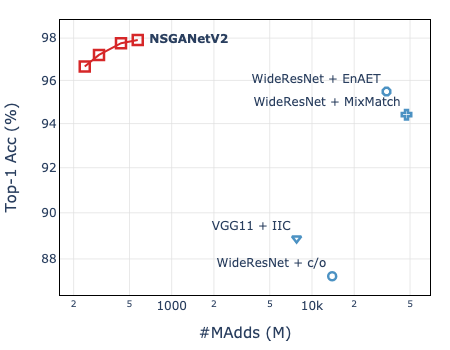

NSGANetV2 is an efficient NAS algorithm for generating task-specific models that are competitive under multiple competing objectives. It comprises two surrogates: one at the architecture level to improve sample efficiency, and one at the weights level, through a supernet, to improve gradient descent training efficiency.


Datasets

Download the datasets from the links embedded in the names. Datasets with * can be automatically downloaded.

| Dataset | Type | Train Size | Test Size | #Classes |
|---|---|---|---|---|
| ImageNet | multi-class | 1,281,167 | 50,000 | 1,000 |
| CINIC-10 | multi-class | 180,000 | 9,000 | 10 |
| CIFAR-10* | multi-class | 50,000 | 10,000 | 10 |
| CIFAR-100* | multi-class | 50,000 | 10,000 | 100 |
| STL-10* | multi-class | 5,000 | 8,000 | 10 |
| FGVC Aircraft* | fine-grained | 6,667 | 3,333 | 100 |
| DTD | fine-grained | 3,760 | 1,880 | 47 |
| Oxford-IIIT Pets | fine-grained | 3,680 | 3,369 | 37 |
| Oxford Flowers102 | fine-grained | 2,040 | 6,149 | 102 |

How to evaluate NSGANetV2 models

Download the models (net.config) and weights (net.init) from [Google Drive] or [Baidu Yun] (extraction code: 4isq).

""" NSGANetV2 pretrained models

Syntax: python validation.py \

--dataset [imagenet/cifar10/...] --data /path/to/data \

--model /path/to/model/config/file --pretrained /path/to/model/weights

"""

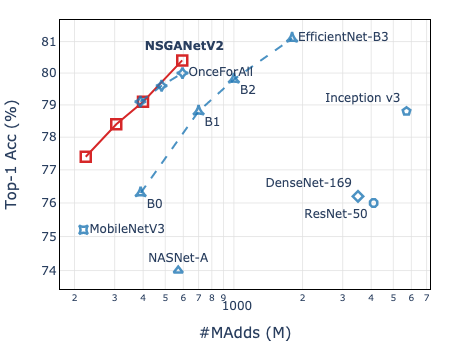

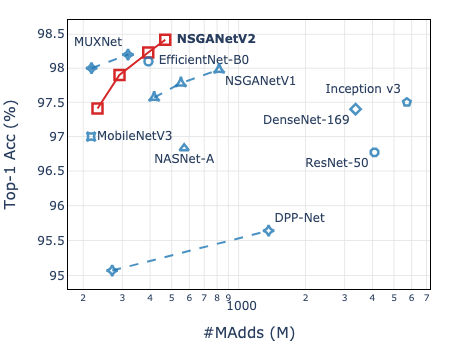

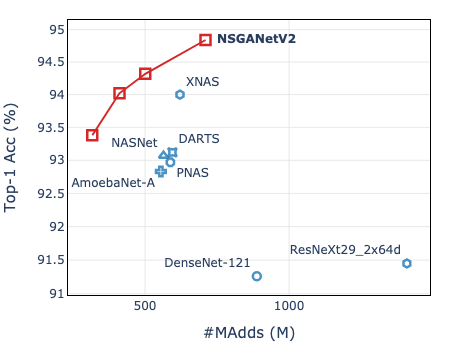

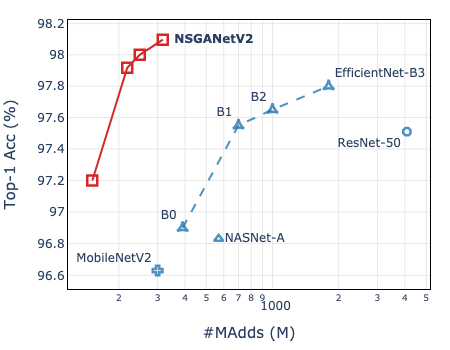

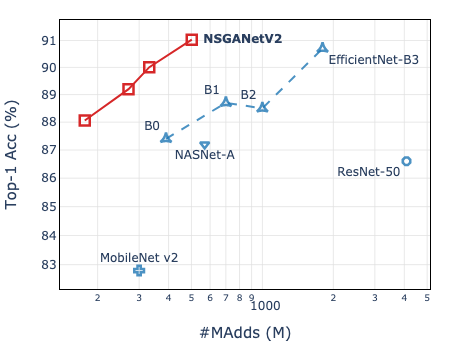

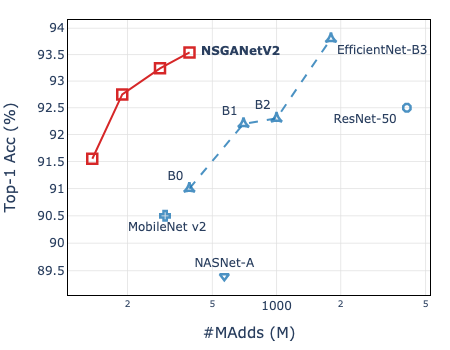

| ImageNet | CIFAR-10 | CINIC10 |
|---|---|---|
| FLOPs@225: [Google Drive] | FLOPs@232: [Google Drive] | FLOPs@317: [Google Drive] |
| FLOPs@312: [Google Drive] | FLOPs@291: [Google Drive] | FLOPs@411: [Google Drive] |
| FLOPs@400: [Google Drive] | FLOPs@392: [Google Drive] | FLOPs@501: [Google Drive] |
| FLOPs@593: [Google Drive] | FLOPs@468: [Google Drive] | FLOPs@710: [Google Drive] |

| Flowers102 | Aircraft | Oxford-IIIT Pets |
|---|---|---|
| FLOPs@151: [Google Drive] | FLOPs@176: [Google Drive] | FLOPs@137: [Google Drive] |
| FLOPs@218: [Google Drive] | FLOPs@271: [Google Drive] | FLOPs@189: [Google Drive] |
| FLOPs@249: [Google Drive] | FLOPs@331: [Google Drive] | FLOPs@284: [Google Drive] |
| FLOPs@317: [Google Drive] | FLOPs@502: [Google Drive] | FLOPs@391: [Google Drive] |

| CIFAR-100 | DTD | STL-10 |
|---|---|---|
| FLOPs@261: [Google Drive] | FLOPs@123: [Google Drive] | FLOPs@240: [Google Drive] |
| FLOPs@398: [Google Drive] | FLOPs@164: [Google Drive] | FLOPs@303: [Google Drive] |
| FLOPs@492: [Google Drive] | FLOPs@202: [Google Drive] | FLOPs@436: [Google Drive] |
| FLOPs@796: [Google Drive] | FLOPs@213: [Google Drive] | FLOPs@573: [Google Drive] |

How to use MSuNAS to search

""" Bi-objective search

Syntax: python msunas.py \

--dataset [imagenet/cifar10/...] --data /path/to/dataset/images \

--save search-xxx \ # dir to save search results

--sec_obj [params/flops/cpu] \ # objective (in addition to top-1 acc)

--n_gpus 8 \ # number of available gpus

--supernet_path /path/to/supernet/weights \

--vld_size [10000/5000/...] \ # number of subset images from training set to guide search

--n_epochs [0/5]

"""

- Download the pre-trained (on ImageNet) supernet from here.
- It supports searching for FLOPs, Params, and Latency as the second objective.
  - To optimize latency on your own device, you need to first construct a `look-up-table` for your own device, like this.
- Choose an appropriate `--vld_size` to guide the search, e.g. 10,000 for ImageNet, 5,000 for CIFAR-10/100.
- Set `--n_epochs` to `0` for ImageNet and `5` for all other datasets.
- See here for some examples.
- Output file structure:
  - Every architecture sampled during search has `net_x.subnet` and `net_x.stats` stored in the corresponding iteration dir.
  - A stats file, `iter_x.stats`, is generated at the end of each iteration; it stores every architecture evaluated so far in `["archive"]`, and iteration-wise statistics, e.g. hypervolume in `["hv"]` and accuracy-predictor statistics in `["surrogate"]`.
  - If any architectures failed to evaluate during search, you may revisit them in the `failed` sub-dir under the experiment dir.
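
The hypervolume recorded in `["hv"]` summarizes how much of the objective space the current trade-off front covers. The sketch below illustrates the idea for two minimized objectives (for example top-1 error and FLOPs). It is a minimal, self-contained illustration: the function names, the example numbers, and the reference point are assumptions made here, and the way MSuNAS normalizes objectives or picks its reference point is not described in this README.

```python
from typing import List, Tuple

# (first objective, second objective), both to be minimized,
# e.g. (top-1 error in %, FLOPs in millions). Illustrative only.
Point = Tuple[float, float]


def pareto_front(points: List[Point]) -> List[Point]:
    """Return the non-dominated points, sorted by the first objective."""
    front: List[Point] = []
    best_second = float("inf")
    # After sorting by (first, second), a point is non-dominated exactly
    # when its second objective beats every point that came before it.
    for first, second in sorted(set(points)):
        if second < best_second:
            front.append((first, second))
            best_second = second
    return front


def hypervolume_2d(points: List[Point], ref: Point) -> float:
    """Area dominated by the front and bounded by the reference point."""
    volume = 0.0
    prev_second = ref[1]
    for first, second in pareto_front(points):
        if first >= ref[0] or second >= ref[1]:
            continue  # points outside the reference box contribute nothing
        # Horizontal slab between the previous and the current front point.
        volume += (ref[0] - first) * (prev_second - second)
        prev_second = second
    return volume


if __name__ == "__main__":
    # Hypothetical (error %, MFLOPs) pairs, not results from the paper.
    archive = [(20.0, 600.0), (25.0, 300.0), (30.0, 200.0),
               (26.0, 400.0), (22.0, 650.0)]
    print(pareto_front(archive))
    print(hypervolume_2d(archive, ref=(40.0, 800.0)))
```

A hypervolume that grows from one iteration to the next indicates that the search is still finding architectures that improve the trade-off front.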

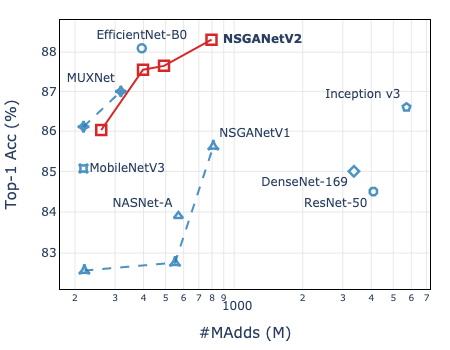

| ImageNet | CIFAR-10 |
|---|---|
|  |  |

How to choose architectures

Once the search is completed, you can choose suitable architectures as follows:

- If you have preferences, e.g. architectures with xx.x% top-1 acc. and xxxM FLOPs, etc.

""" Find architectures with objectives close to your preferences
Syntax: python post_search.py \
  -n 3 \ # number of desired architectures you want, the most accurate architecture will always be selected
  --save search-imagenet/final \ # path to the dir to store the selected architectures
  --expr search-imagenet/iter_30.stats \ # path to last iteration stats file in experiment dir
  --prefer top1#80+flops#150 \ # your preferences, i.e. you want an architecture with 80% top-1 acc. and 150M FLOPs
  --supernet_path /path/to/imagenet/supernet/weights \
"""

- If you do not have preferences, pass `None` to the `--prefer` argument; architectures will then be selected based on trade-offs.
- All selected architectures should have three files created:
  - `net.subnet`: used to sample the architecture from the supernet
  - `net.config`: configuration file that defines the full architectural components
  - `net.inherited`: the inherited weights from the supernet
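
To make the preference string concrete, the sketch below parses a value such as `top1#80+flops#150` and ranks a list of evaluated architectures by their distance to that target. This is only an illustration of the idea: the helper names, the `(name, top1, flops)` tuple layout, and the range-normalized Euclidean distance are assumptions made here, and `post_search.py` may select architectures differently.

```python
from typing import Dict, List, Tuple

# Hypothetical record layout: (architecture name, top-1 acc. in %, MFLOPs).
Entry = Tuple[str, float, float]


def parse_preference(spec: str) -> Dict[str, float]:
    """Turn 'top1#80+flops#150' into {'top1': 80.0, 'flops': 150.0}."""
    prefs: Dict[str, float] = {}
    for item in spec.split("+"):
        name, value = item.split("#")
        prefs[name] = float(value)
    return prefs


def closest_to_preference(archive: List[Entry],
                          prefs: Dict[str, float],
                          n: int) -> List[Entry]:
    """Return the n entries nearest to the preferred (top1, flops) target."""
    top1_values = [entry[1] for entry in archive]
    flops_values = [entry[2] for entry in archive]
    # Normalize each objective by its observed range so that accuracy
    # (a few percent) and FLOPs (hundreds of millions) are comparable.
    top1_range = (max(top1_values) - min(top1_values)) or 1.0
    flops_range = (max(flops_values) - min(flops_values)) or 1.0

    def distance(entry: Entry) -> float:
        _, top1, flops = entry
        d_top1 = (top1 - prefs["top1"]) / top1_range
        d_flops = (flops - prefs["flops"]) / flops_range
        return (d_top1 ** 2 + d_flops ** 2) ** 0.5

    return sorted(archive, key=distance)[:n]


if __name__ == "__main__":
    # Made-up numbers for illustration, not results from the paper.
    archive = [("a", 75.0, 100.0), ("b", 79.5, 160.0),
               ("c", 81.0, 300.0), ("d", 78.0, 140.0)]
    prefs = parse_preference("top1#80+flops#150")
    print(closest_to_preference(archive, prefs, n=2))
```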

How to validate architectures

To realize the full potential of the searched architectures, we further fine-tune from the inherited weights. The commands below assume that you have both the net.config and net.inherited files.

""" Fine-tune on ImageNet from inherited weights

Syntax: sh scripts/distributed_train.sh 8 \ # of available gpus

/path/to/imagenet/data/ \

--model [nsganetv2_s/nsganetv2_m/...] \ # just for naming the output dir

--model-config /path/to/model/.config/file \

--initial-checkpoint /path/to/model/.inherited/file \

--img-size [192, ..., 224, ..., 256] \ # image resolution, check "r" in net.subnet

-b 128 --sched step --epochs 450 --decay-epochs 2.4 --decay-rate .97 \

--opt rmsproptf --opt-eps .001 -j 6 --warmup-lr 1e-6 \

--weight-decay 1e-5 --drop 0.2 --drop-path 0.2 --model-ema --model-ema-decay 0.9999 \

--aa rand-m9-mstd0.5 --remode pixel --reprob 0.2 --amp --lr .024 \

--teacher /path/to/supernet/weights \

"""

- Adjust learning rate as `(batch_size_per_gpu * #GPUs / 256) * 0.006` depending on your system config.

""" Fine-tune on CIFAR-10 from inherited weights
Syntax: python train_cifar.py \
  --data /path/to/CIFAR-10/data/ \
  --model [nsganetv2_s/nsganetv2_m/...] \ # just for naming the output dir
  --model-config /path/to/model/.config/file \
  --img-size [192, ..., 224, ..., 256] \ # image resolution, check "r" in net.subnet
  --drop 0.2 --drop-path 0.2 \
  --cutout --autoaugment --save
"""
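
The learning-rate rule for the ImageNet fine-tuning command can be checked with a few lines of Python. The helper name `scaled_lr` is made up for this illustration; the formula and the base value 0.006 are the ones quoted above. With `-b 128` on 8 GPUs it reproduces the `--lr .024` used in the ImageNet command.

```python
def scaled_lr(batch_size_per_gpu: int, n_gpus: int, base_lr: float = 0.006) -> float:
    """Scale the base learning rate linearly with the total batch size.

    Implements (batch_size_per_gpu * #GPUs / 256) * base_lr.
    """
    total_batch_size = batch_size_per_gpu * n_gpus
    return total_batch_size / 256 * base_lr


if __name__ == "__main__":
    # 128 images per GPU on 8 GPUs, as in the ImageNet command.
    print(scaled_lr(128, 8))
    # A smaller hypothetical setup: 64 images per GPU on 4 GPUs.
    print(scaled_lr(64, 4))
```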

More Use Cases (coming soon)

- With a different supernet (search space).

- NASBench 101/201.

- Architecture visualization.

Requirements

- Python 3.7

- Cython 0.29 (optional; makes `non_dominated_sorting` faster in pymoo)

- PyTorch 1.5.1

- pymoo 0.4.1

- torchprofile 0.0.1 (for FLOPs calculation)

- OnceForAll 0.0.4 (lower level supernet)

- timm 0.1.30

- pySOT 0.2.3 (RBF surrogate model)

- pydacefit 1.0.1 (GP surrogate model)