Abstract

Metrics like disk activity and network traffic are widespread sources of diagnosis and monitoring information in datacenters and networks. However, as the scale of these systems increases, examining the raw data yields diminishing insight. We present RainMon, a novel end-to-end approach for mining timeseries monitoring data designed to handle its size and unique characteristics. Our system is able to (a) mine large, bursty, real-world monitoring data, (b) find significant trends and anomalies in the data, (c) compress the raw data effectively, and (d) estimate trends to make forecasts. Furthermore, RainMon integrates the full analysis process from data storage to the user interface to provide accessible long-term diagnosis. We apply RainMon to three real-world datasets from production systems and show its utility in discovering anomalous machines and time periods.

Overview

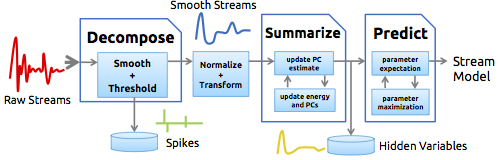

Timeseries data are prevalent in large-scale computing centers. Systems often capture sampled metrics of performance and utilization, and even sensor data such as temperature. These streams are used for monitoring, placement, optimization, and more. RainMon is a framework for managing massive datacenter timeseries streams that are long and bursty. It uses a multi-stage modeling approach. In the first phase, each incoming stream is decomposed into “smooth” and “spiky” components. In the second phase, the streams are summarized into a small set of trends that can be visualized and understood. In the third phase, predictions are made about the future state of the system. The framework incorporates several existing algorithms from the literature, including Cypress (decomposition), SPIRIT (summarization), and Kalman filters (prediction). RainMon has been applied to large data streams collected from production clusters, where it detected real anomalies.
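
The first two phases can be illustrated in a few lines of plain Python. This is a minimal sketch under stated assumptions, not RainMon's implementation. In `decompose`, a centered moving average and a threshold on the remainder stand in for Cypress's filtering. `spirit` follows the SPIRIT update rule for tracking hidden variables with exponential forgetting, but the number of hidden variables `k` is fixed rather than adapted. The function names, the window size, the spike threshold `k`, and the forgetting factor `lam` are hypothetical choices made for illustration.

```python
import math
from typing import List, Tuple

Vector = List[float]


def decompose(series: Vector, window: int = 5, k: float = 3.0) -> Tuple[Vector, Vector, Vector]:
    """Phase 1 stand-in: split one stream into smooth, spiky, and residual parts.

    A centered moving average plays the low-pass filter. Remainder points
    larger than k times the remainder's root-mean-square are kept as spikes.
    By construction, smooth + spiky + residual reproduces the input.
    """
    n = len(series)
    half = window // 2
    smooth = []
    for i in range(n):
        lo, hi = max(0, i - half), min(n, i + half + 1)
        smooth.append(sum(series[lo:hi]) / (hi - lo))
    rough = [x - s for x, s in zip(series, smooth)]
    rms = math.sqrt(sum(r * r for r in rough) / n) or 1.0
    spiky = [r if abs(r) > k * rms else 0.0 for r in rough]
    residual = [r - p for r, p in zip(rough, spiky)]
    return smooth, spiky, residual


def spirit(rows: List[Vector], k: int = 1, lam: float = 0.96) -> Tuple[List[Vector], List[Vector]]:
    """Phase 2 sketch: SPIRIT-style tracking of k hidden variables.

    rows[t] holds one sample from each of the n streams at time t.
    Returns the k participation-weight vectors and, for every time step,
    the k hidden-variable values.
    """
    n = len(rows[0])
    weights = [[1.0 if j == i else 0.0 for j in range(n)] for i in range(k)]
    energy = [1e-3] * k  # small positive starting energy for each hidden variable
    hidden = []
    for row in rows:
        x = list(row)
        ys = []
        for i in range(k):
            w = weights[i]
            y = sum(a * b for a, b in zip(w, x))  # project the sample onto w_i
            energy[i] = lam * energy[i] + y * y  # exponentially decayed energy
            err = [a - y * b for a, b in zip(x, w)]
            weights[i] = [b + (y / energy[i]) * e for b, e in zip(w, err)]
            # Deflate: remove this component before tracking the next one
            x = [a - y * b for a, b in zip(x, weights[i])]
            ys.append(y)
        hidden.append(ys)
    return weights, hidden


# Usage: three streams sharing one trend; a copy of stream 0 gets a spike.
base = [2.0 + math.sin(0.2 * t) for t in range(60)]
spiky_stream = list(base)
spiky_stream[30] += 15.0

smooth, spiky, residual = decompose(spiky_stream)
print("spikes at", [i for i, v in enumerate(spiky) if v != 0.0])

streams = [base, [2.0 * b for b in base], [-b for b in base]]
rows = [[s[t] for s in streams] for t in range(60)]  # time-major samples
weights, hidden = spirit(rows, k=1)
print("participation weights", [round(w, 3) for w in weights[0]])
```

Because the three streams are scaled copies of one signal, a single hidden variable is enough. Its participation weights line up with the ratio 1 : 2 : -1 between the streams, so 60 × 3 raw values can be described by one 60-point trend plus three weights.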

Contributions

- Detect potential anomalies or alert conditions. Dramatic changes in model parameters can signal problems or abnormalities.

- Reduce the storage requirements of streams through compression. By storing only the model parameters and occasional original data points, large timeseries data can be summarized compactly.

- Improve alerting and placement algorithms by predicting future usage. Even a short-term view of future usage can be valuable for decision-making, and running the fitted model forward in time provides it.
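
Playing a model forward can be illustrated with a small Kalman filter. The sketch below assumes a local-linear-trend state (level and slope) with hand-picked noise variances `q` and `r`. It does not reproduce how RainMon specifies or learns its prediction model, and `kalman_forecast` is a hypothetical name. One plausible design, also an assumption here, is to run such a filter on each summarized trend rather than on every raw stream.

```python
from typing import List


def kalman_forecast(obs: List[float], horizon: int, q: float = 1e-3, r: float = 0.1) -> List[float]:
    """Filter a non-empty series with a local-linear-trend Kalman filter, then forecast.

    State is [level, slope]. q (process noise, on both states) and r
    (observation noise) are assumed values, not learned ones.
    """
    level, slope = obs[0], 0.0
    p00, p01, p10, p11 = 1.0, 0.0, 0.0, 1.0  # state covariance P
    for z in obs[1:]:
        # Predict with F = [[1, 1], [0, 1]]: x <- F x, P <- F P F^T + diag(q, q)
        level, slope = level + slope, slope
        p00, p01, p10, p11 = (
            p00 + p01 + p10 + p11 + q,
            p01 + p11,
            p10 + p11,
            p11 + q,
        )
        # Update with H = [1, 0]: only the level is observed
        s = p00 + r
        k0, k1 = p00 / s, p10 / s  # Kalman gain K = P H^T / s
        innov = z - level
        level, slope = level + k0 * innov, slope + k1 * innov
        # P <- (I - K H) P
        p00, p01, p10, p11 = (
            (1 - k0) * p00,
            (1 - k0) * p01,
            p10 - k1 * p00,
            p11 - k1 * p01,
        )
    # Play the model forward: propagate the mean with no new observations
    return [level + h * slope for h in range(1, horizon + 1)]


history = [2.0 * t + 1.0 for t in range(50)]  # a steadily rising metric
print(kalman_forecast(history, horizon=3))  # close to [101.0, 103.0, 105.0]
```

The forecast propagates only the state mean. Its uncertainty grows with the horizon, so a fuller version would also propagate `P` to report how much each forecast step can be trusted.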

References

- Ilari Shafer, Kai Ren, Vishnu Naresh Boddeti, Yoshihisa Abe, Gregory R. Ganger, and Christos Faloutsos, RainMon: An Integrated Approach to Mining Bursty Timeseries Monitoring Data, 18th ACM SIGKDD Conference on Knowledge Discovery and Data Mining (KDD), 2012 (Oral)