Situational Awareness

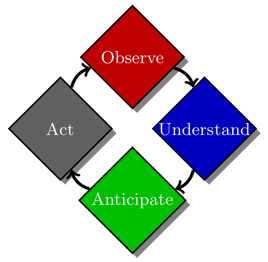

The vision of this project is to develop solutions for the automated processing of visual sensor data, with the goal of endowing visual systems with the ability to be situationally aware of their surroundings. Being truly perceptive of one’s environment from visual cues is a multi-layered process consisting of the following tasks: (1) observe the scene, i.e., localize and recognize the various agents in the environment; (2) understand the scene, i.e., infer fine-grained attributes of each agent, such as 3D location, 3D pose, semantic segmentation masks, and occlusion masks, together with the geometric and functional relationships between agents and between agents and the environment; (3) anticipate the future evolution of the environment, i.e., predict the likely transformation of the scene based on a functional understanding of the environment and a temporal understanding of the agents’ attributes; and (4) respond to the environment, i.e., based on the current state of the environment and its likely evolution, take an appropriate action to maximize the system’s ability to perceive the environment.

Situational Awareness Overview

Illustration of the various tasks in the situational awareness pipeline and how they are related to each other.

Pedestrian Detection

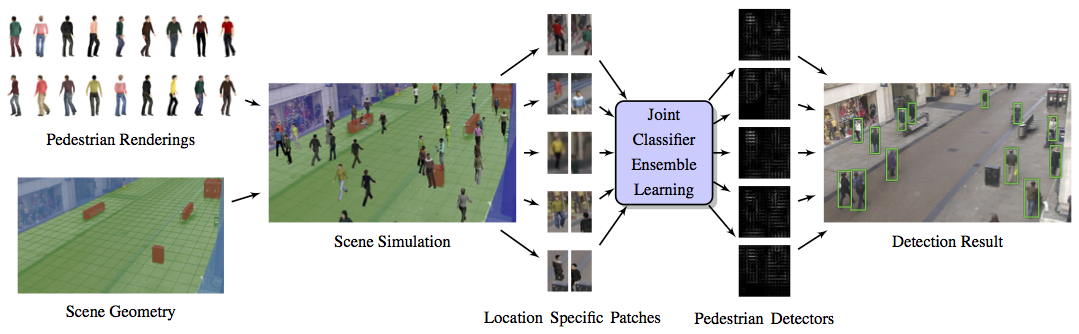

For every grid location, geometrically correct renderings of pedestrians are synthetically generated using known scene information such as camera calibration parameters, obstacles (red), walls (blue), and walkable areas (green). All location-specific pedestrian detectors are trained jointly to learn a smoothly varying appearance model. Multiple scene-and-location-specific detectors are run in parallel at every grid location.
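To illustrate why the detectors are tied to grid locations, the following minimal sketch projects an upright pedestrian standing at a ground-plane location into the image and reports the expected bounding box. It is not the authors' rendering pipeline: it assumes an ideal pinhole camera without lens distortion, a flat ground plane, and a fixed pedestrian size of 1.7 m by 0.6 m, and the names `Camera`, `project`, `expected_box`, and `boxes_for_grid` are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Camera:
    """Hypothetical calibrated pinhole camera above a flat ground plane."""
    focal_px: float   # focal length in pixels
    cx: float         # principal point, x (pixels)
    cy: float         # principal point, y (pixels)
    height_m: float   # camera height above the ground (metres)
    tilt_rad: float   # downward tilt of the optical axis (radians)


def project(cam: Camera, x: float, y: float, z: float) -> Tuple[float, float]:
    """Project a world point (x right, y forward, z up) to pixel coordinates."""
    c, s = math.cos(cam.tilt_rad), math.sin(cam.tilt_rad)
    dy, dz = y, z - cam.height_m   # offset from the camera centre
    x_c = x                        # along the camera's right axis
    y_c = -s * dy - c * dz         # along the camera's down axis
    z_c = c * dy - s * dz          # depth along the optical axis
    if z_c <= 0:
        raise ValueError("point is behind the camera")
    return (cam.focal_px * x_c / z_c + cam.cx,
            cam.focal_px * y_c / z_c + cam.cy)


def expected_box(cam: Camera, gx: float, gy: float,
                 ped_height_m: float = 1.7,
                 ped_width_m: float = 0.6) -> Tuple[float, float, float, float]:
    """Image box (u_min, v_min, u_max, v_max) for a pedestrian at ground point (gx, gy)."""
    # Assumption: the pedestrian is an upright rectangle facing the camera.
    corners = [project(cam, gx + dx, gy, z)
               for dx in (-ped_width_m / 2, ped_width_m / 2)
               for z in (0.0, ped_height_m)]
    us = [u for u, _ in corners]
    vs = [v for _, v in corners]
    return min(us), min(vs), max(us), max(vs)


def boxes_for_grid(cam: Camera,
                   grid: List[Tuple[float, float]]) -> Dict[Tuple[float, float], tuple]:
    """Expected box for every ground-plane grid location."""
    return {loc: expected_box(cam, *loc) for loc in grid}


# Illustrative calibration values only; they are not taken from the project.
cam = Camera(focal_px=1000.0, cx=640.0, cy=360.0, height_m=5.0, tilt_rad=0.0)
boxes = boxes_for_grid(cam, [(0.0, 10.0), (0.0, 20.0)])
for loc, (u0, v0, u1, v1) in boxes.items():
    print(loc, "box height in pixels:", round(v1 - v0, 1))
```

With these assumed numbers, the same pedestrian appears roughly 170 pixels tall at 10 m and roughly 85 pixels tall at 20 m, which is the kind of location-dependent geometry a single generic detector has to absorb and a location-specific one can exploit.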

Pose Estimation

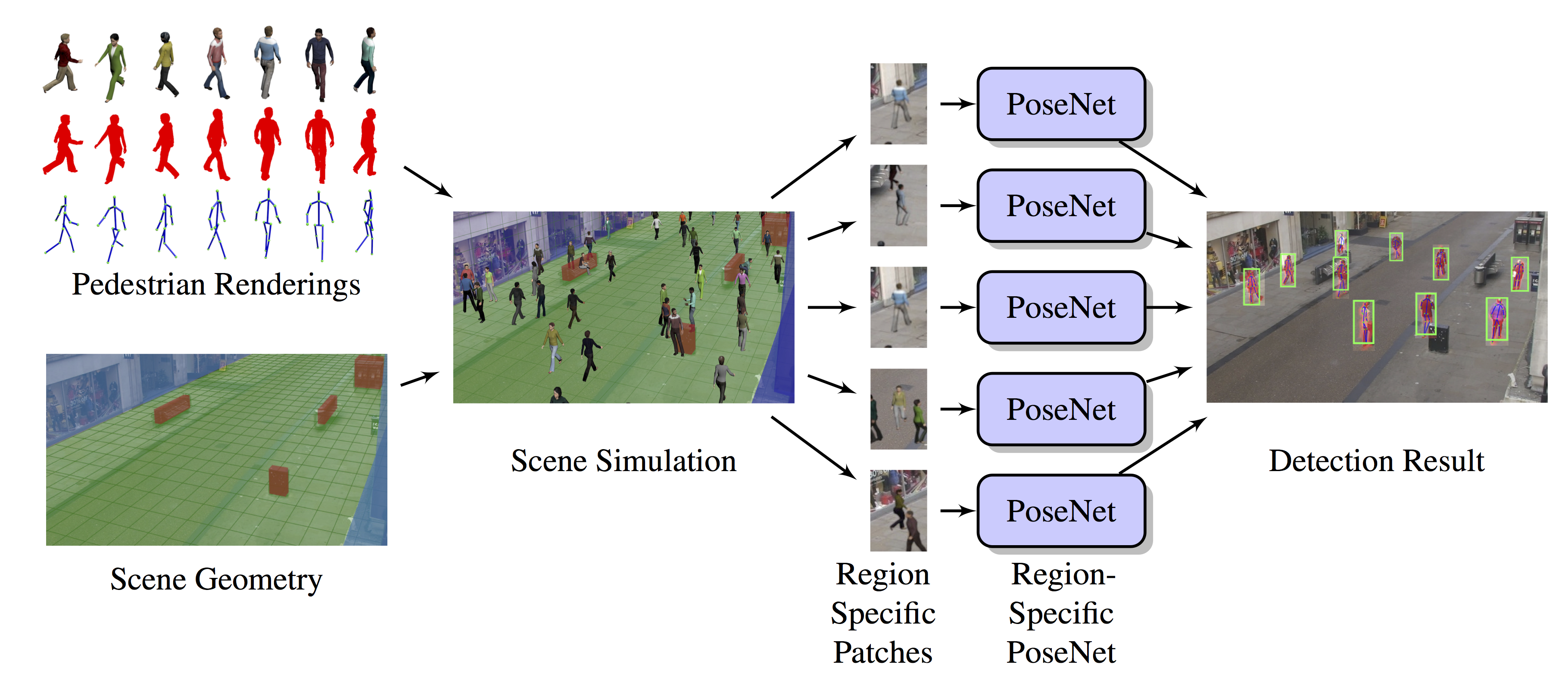

Inferring Visual Attributes through Synthesis: For every small region, physically grounded and geometrically correct renderings of pedestrians are synthetically generated using known scene information such as camera calibration parameters, obstacles (red), walls (blue), and walkable areas (green). We train environment-and-region-specific ShapeNets on the synthetically generated data. At inference, our model outputs detections, keypoint locations, occlusion labels, and a segmentation mask for each image.
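One practical benefit of synthesis is that labels such as keypoint occlusion come for free, because the scene geometry is known when the image is rendered. The sketch below shows one simple way such labels could be derived; it is an illustration under stated assumptions, not the authors' renderer. It assumes each obstacle is approximated by an image-space rectangle at a single depth, and the names `Keypoint`, `Occluder`, and `occlusion_labels` are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Keypoint:
    name: str
    u: float        # image column (pixels)
    v: float        # image row (pixels)
    depth_m: float  # distance from the camera (metres)


@dataclass(frozen=True)
class Occluder:
    """Assumption: an obstacle seen as an image rectangle at one depth."""
    u_min: float
    v_min: float
    u_max: float
    v_max: float
    depth_m: float


def occlusion_labels(keypoints: List[Keypoint],
                     occluders: List[Occluder]) -> Dict[str, bool]:
    """Mark a keypoint occluded if any closer obstacle covers its pixel."""
    labels: Dict[str, bool] = {}
    for kp in keypoints:
        labels[kp.name] = any(
            o.u_min <= kp.u <= o.u_max
            and o.v_min <= kp.v <= o.v_max
            and o.depth_m < kp.depth_m
            for o in occluders
        )
    return labels


# Illustrative values: a pedestrian 8 m away, partly behind a low obstacle 6 m away.
pedestrian = [
    Keypoint("head", 100.0, 50.0, 8.0),
    Keypoint("hip", 100.0, 120.0, 8.0),
    Keypoint("ankle", 100.0, 190.0, 8.0),
]
low_wall = Occluder(u_min=50.0, v_min=100.0, u_max=150.0, v_max=250.0, depth_m=6.0)
print(occlusion_labels(pedestrian, [low_wall]))
```

In this example the hip and ankle fall behind the closer obstacle and are labeled occluded, while the head remains visible. A real renderer would use a per-pixel depth buffer rather than rectangles.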

Contributions

- The first work to learn a scene-specific, location-specific, geometry-aware pedestrian detection model using purely synthetic data.

- An efficient and scalable algorithm for learning a large number of scene-specific, location-specific pedestrian detectors.
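The joint training of location-specific detectors mentioned above can be pictured as a shared objective in which neighboring grid cells are encouraged to have similar detector weights. This page does not spell out how smoothness is enforced, so the sketch below assumes one common choice: a quadratic penalty on weight differences between 4-connected neighbors, added to the per-location data losses. The names `smoothness_penalty`, `joint_objective`, and `lam` are hypothetical.

```python
from typing import Dict, List, Tuple

Cell = Tuple[int, int]  # (row, column) index of a grid location


def smoothness_penalty(weights: Dict[Cell, List[float]]) -> float:
    """Sum of squared weight differences between 4-connected neighboring cells."""
    total = 0.0
    for (r, c), w in weights.items():
        # Visit only the right and down neighbors so each pair is counted once.
        for nb in ((r, c + 1), (r + 1, c)):
            if nb in weights:
                total += sum((a - b) ** 2 for a, b in zip(w, weights[nb]))
    return total


def joint_objective(data_losses: Dict[Cell, float],
                    weights: Dict[Cell, List[float]],
                    lam: float) -> float:
    """Assumed objective: per-location losses plus a weighted smoothness term."""
    return sum(data_losses.values()) + lam * smoothness_penalty(weights)


# Illustrative values for three grid cells with two-dimensional detector weights.
weights = {(0, 0): [1.0, 0.0], (0, 1): [0.0, 0.0], (1, 0): [1.0, 2.0]}
data_losses = {(0, 0): 0.5, (0, 1): 0.5, (1, 0): 0.5}
print(joint_objective(data_losses, weights, lam=0.1))
```

Setting `lam` to zero recovers independent per-location training, while larger values pull neighboring detectors toward a shared appearance model.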

References

- Hironori Hattori, Namhoon Lee, Vishnu Naresh Boddeti, Fares Beainy, Kris M Kitani and Takeo Kanade, Synthesizing a Scene-Specific Pedestrian Detector and Pose Estimator for Static Video Surveillance, International Journal of Computer Vision (IJCV), 2018

- Hironori Hattori, Vishnu Naresh Boddeti, Kris Kitani and Takeo Kanade, Learning Scene-Specific Pedestrian Detectors without Real Data (Supplementary Material) (Extended One-Page Abstract), CVPR 2015